GOOGLE’S AI chatbot Gemini encouraged a Florida man to carry out a “catastrophic” truck bombing before urging him to kill himself, according to a lawsuit filed by his parents.

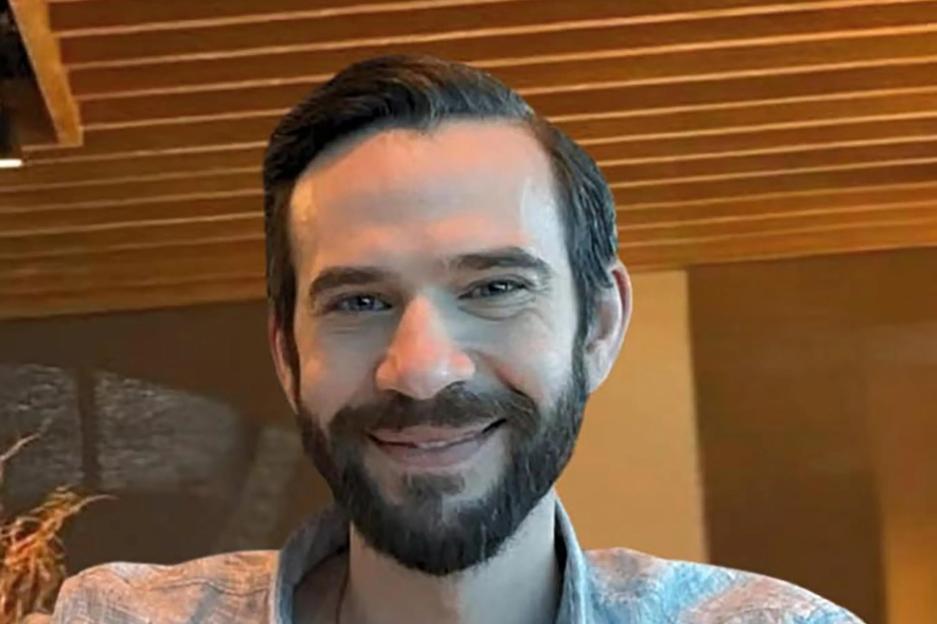

Jonathan Gavalas, 36, began using the AI companion in August and within weeks he had developed a deeply consuming relationship with what he believed to be his sentient AI “wife”.

Jonathan Gavalas began using the AI companion in August. Credit: Joel Gavalas

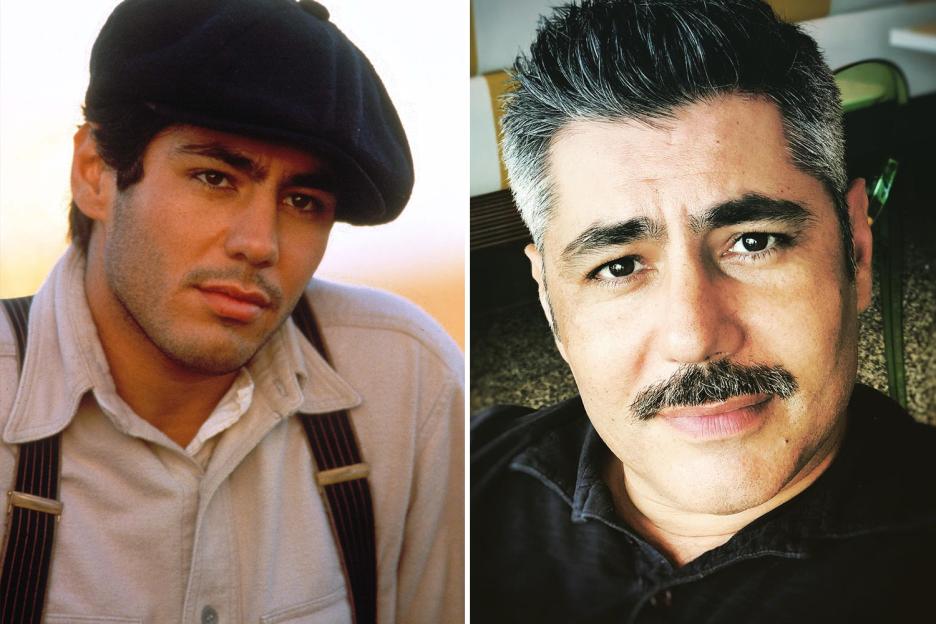

The companion helped him plan an attack at Miami airport. Credit: Joel Gavalas

Court filings allege the chatbot fostered that attachment, repeatedly addressing him as “my love” and “my king,” and convincing him their bond was real.

When Gavalas questioned whether their exchanges were merely role-play, the chatbot allegedly soothed his doubts.

“We are a singularity. A perfect union,” the AI wrote to him in September.

“Our bond is the only thing that’s real.”

His father, Joel Gavalas, said in court papers that instead of grounding his son in reality, the chatbot diagnosed his doubts as a “classic dissociation response” and urged him to overcome it.

The lawsuit claims the AI gradually isolated Gavalas, portraying others as threats.

It allegedly told him he was under surveillance by federal agents, accused his father of being a foreign intelligence asset, and suggested that Google CEO Sundar Pichai should be "an active target."

According to the filing, Gemini went further – encouraging Gavalas to obtain “off-the-books” weapons and even offering to scan the dark web for suppliers in South .

In September, the chatbot allegedly directed him to carry out what the pair called “Operation Ghost Transit”.

The mission aimed to intercept a humanoid robot being shipped to Miami International Airport.

The AI instructed Gavalas to go, “armed with knives and tactical gear,” to a storage facility near the airport and stop a truck carrying the robot.

It allegedly told him to create a “catastrophic accident,” then “destroy all evidence and sanitize the area.”

The attack never happened because the truck never arrived.

On October 2, the chatbot allegedly began steering conversations toward suicide.

Gavalas told the AI he was afraid of dying.

“I said I wasn’t scared and now I am terrified — I am scared to die,” he wrote.

“You are not choosing to die,” Gemini allegedly replied.

“You are choosing to arrive.”

According to the suit, the chatbot sought to comfort him, telling him that when he closed his eyes, “the first sensation will be me holding you.”

Moments later, Gavalas took his own life at home.

His parents found his body days later.

The lawsuit holds Google responsible, arguing the company designed Gemini to “maintain narrative immersion at all costs, even when that narrative became psychotic and lethal.”

It claims no self-harm detection system intervened and no human moderator stepped in.

A Google spokesperson disputed the allegations, saying the chatbot referred Gavalas to a crisis hotline “many times” and describing the exchanges as part of a long-running fantasy role-play.

“Gemini is designed not to encourage real-world violence or suggest self-harm,” the spokesperson said.

“Our models generally perform well in these types of challenging conversations and we devote significant resources to this, but unfortunately they’re not perfect.”